LLMs Are Already The Next Calculators

I got you, you’re curious. What if they are the next calculator. What’s this guy talking about.

Over the next few sections I’m going to share my—very subjective, very opinionated, very personal—take on why I think LLMs are already the next calculators.

Who Am I

I'm Sid. I've been in industry for over 5 years, working as an infrastructure engineering consultant for the past year. I’ve always worked at some capacity on DevOps / Infra at every role I’ve been at.

1. Screw Remembering Stuff

I’m bad at it. I don’t like it. My least favorite subjects were always Biology and Chemistry. Not because that content was not exciting - it was because I had to memorize so many seemingly random things.

I’m not afraid to admit it - I’m not good at remembering things. But part of life is understanding your weaknesses. If a great plumber didn’t have a plunger - does that make them a bad plumber?

And it seems like this is a common problem. Modern memory research suggests that human memory is reconstructive, rather than a video recording; we tend to remember the 'gist' or general meaning of an event while quickly losing specific details or verbatim facts (Lacy & Stark, 2013).

Let me bring notes and write things down. The barometer can be speed, ability to quickly decide - but making the barometer arbitrary things to remember (cough cough leet code) no longer makes sense. It's not the bottleneck it used to be.

With the invention of the calculator, we were able to free ourselves from the burden of remembering times tables and other arithmetic. We could now focus on the more important things - like how to properly use the calculator.

And just like the calculator can give you incorrect results if you don’t use it correctly, an LLM won't give you the best results if you don't give it the best context. But when you do, it becomes a calculator of complex information that you no longer need to struggle to store in your own memory.

2. Stop gatekeeping the hard parts

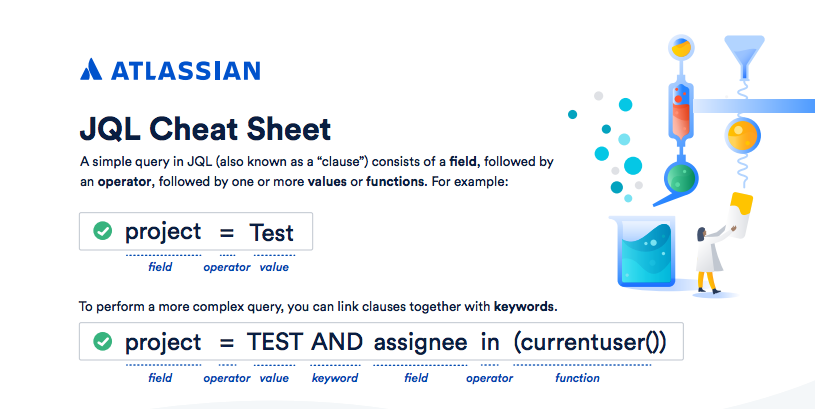

Picture a complex technical database. A company, FooBar Inc., ships FBQL (FooBar Query Language). It’s powerful—it can do what you want—if you already know how to express it.

Now, pick any real-life, serious query language: it starts opaque, then documentation improves, patterns emerge, and fundamentals become teachable.

Ask yourself: is writing the query still the hardest part? Your knee-jerk answer might be no—you’ll say: people spent years building an efficient, beautiful engine that traverses huge data in milliseconds—that was the hard part.

At first, I’d agree. Ingesting and traversing data at scale was the core difficulty.

Then zoom out twenty years. Priorities shift. What was hard becomes table stakes. We move absurd amounts of data every day—I can stream video, push a container, and keep multiple editor tabs busy from my phone's hotspot. The spectacle is easy to take for granted.

That’s a long way of saying: the frontier moves. There will always be mission-critical systems where performance and correctness are everything—but there’s also a huge class of work that’s non–mission-critical and human-in-the-loop, where the old, high barrier to entry model of pouring over documentation and learning the ins and outs of a new language stops making sense.

Think of questions like:

How many customer tickets did we have last week?

You shouldn't need to learn FBQL to answer that. An LLM that understands your schema can translate plain English into the right query—and suddenly the high barrier to entry evaporates.

3. Repetitive work is boring

Some people find repetition relaxing—if that’s you, I say jump to point 4.

I’m not talking about giant refactors (though help there matters too). I mean the fundamental stuff models are getting good at.

Similar to point 2 - it's likely that many of you reading are not re-inventing the wheel. Think of the core things that make up an app:

- Authentication: You would be crazy to build your own - you are definitely going to trust someone else

- Database: Literally the same as Authentication

- Networking (DNS, CDN, etc): Again, exactly the same thing

In most cases, we are all building the same things. The problems are more niche - complex in implementation. But the tech behind these problems has generally become fundamental. Not to take away from our heroes on the front lines of these often open source projects - their work is invaluable. But at the same time, there are other challenging problems out there that require a different set of skills.

As communities mature and tools proliferate—and as models improve at the kinds of tasks humans are “generally” good at—this shift to using LLMs for that boiler plate, repetitive work feels inevitable, and frankly a natural progression.

The “shadcn-ification” of the frontend

If you’re not familiar: shadcn/ui is an enormously popular way to assemble buttons, dialogs, typography, and the rest of a coherent design language. I’ve lost count of how often I’ve recognized those patterns in the wild.

It’s not bad—it often looks great—but it’s recognizable. LLMs are also amazing and working with it due to how popular it is. The suite keeps getting bigger and better, and the community (and the LLMs trained on those communities) are growing in parallel. These are exactly the sorts of non–mission-critical, human-in-the-loop workflows where the first wave of AI changes the economics the fastest.

Frontend is incredibly important—but it’s not the same class of problem as backend and infrastructure reliability. We’ll keep pushing automation “up the stack” over time.

4. I am literally a superhuman

Remembering 5 × 35 matters. If you can do 234 × 3,810 in your head right now, you might be a magician.

That’s the world before the calculator.

In the same way, my ability to context-switch has gone up—as long as I keep the fundamentals straight, I can jump between projects, skim a README, or re-enter a thread without paying the full “mental recomputation tax.”

If I can keep 3 threads warm, and can avoid the busy work that is re-inventing the button component, I have theoretically just done 3 times the work. If you can extend this to slightly more complicated tasks - your barrier is now your own ability to context switch and review.

If you can break down tasks into common fundamentals (shout out point 3), you can then coach a few agents to do things in parallel, while you still have time to review. Asking it to do the whole tasks is bold, as was asking a calculator to remember common formulas or constants. But to ask it to do a set of pre-defined known tasks is much more reasonable and effective.

Maybe more importantly: it helps you jump back into context.

Picture this: You’re about to leave for vacation - you tell yourself - I’m going to come back to this first thing when I get back, it’s almost done!

Now picture this: You’re back - you hate it here. All you want to do is go back on vacation.

The ability to jump back into that project - and ask questions about it (e.g. README.md / AGENTS.md that you’ve been maintaining) allows you to quickly jump into context without having to reorient yourself.

Why read documentation that covers points A-Z, when you are really only confused about C-F. Targeted - personalized interactions allow you to spend your time most effectively.

This ones a bit of a stretch - but I have to imagine this is how people felt when the calculator became common.

Conclusion

It’s a calculator. If you expect it to “know” the gravitational formula on day zero with no guidance, you’ll have a bad time. If you bring the formula, you save a ton of time.

It’s happening faster than we like to admit—I’d be surprised to meet someone in tech who has never used an LLM to draft or fix a SQL query.

In the tech space (for now) it's like not using a calculator. And that's why…LLMs are already the next calculators.